Monitaur leads the way in end-to-end AI and Machine Learning assurance. Our goal is to enable independently verifiable proof that your AI/ML systems are functioning responsibly and as expected.

Our cloud-based applications are SOC 2 Type II certified and deliver comprehensive, user-friendly processes for your teams across the entire AI/ML system lifecycle.

ML systems present a new paradigm of technology and business decisioning.

Individuals and organizations responsible for managing risk and compliance – including regulators – have not previously contended with such dynamic and variable models and systems.

Because machine learning is making key decisions that affect people's lives, livelihoods, and opportunities, trust and confidence in AI/ML systems are paramount. There should be a reasonable ability to evaluate and verify their safety, fairness, and compliance.

Companies, regulators, and consumers all benefit from an objective method of assuring ML systems.

ML models can behave unexpectedly, creating enormous liabilities and the risk of unforeseen damages and reputational harm.

ML systems are opaque to non-technical professionals, requiring more ongoing attention from objective parties to ensure safety and compliance.

Unassured ML applications can lead to broad misconceptions and bias, undermining the long-term promise of the technology.

Three core pillars support effective assurance and responsible use of AI/ML.

Machine learning assurance (MLA) requires a clear understanding and documentation of the considerations, goals, and risks evaluated during the lifecycle of an ML application.

MLA requires that every business and technical decision can be verified and interrogated.

MLA requires that any ML application can be reasonably evaluated and understood by an objective individual or party not involved in the model development.

Creating assurance for ML systems requires a continuous, coherent approach throughout the lifecycle of projects, as well as across your enterprise operations. Careful coordination of people, processes, and systems can create the clarity, confidence, and accountability that practitioners need.

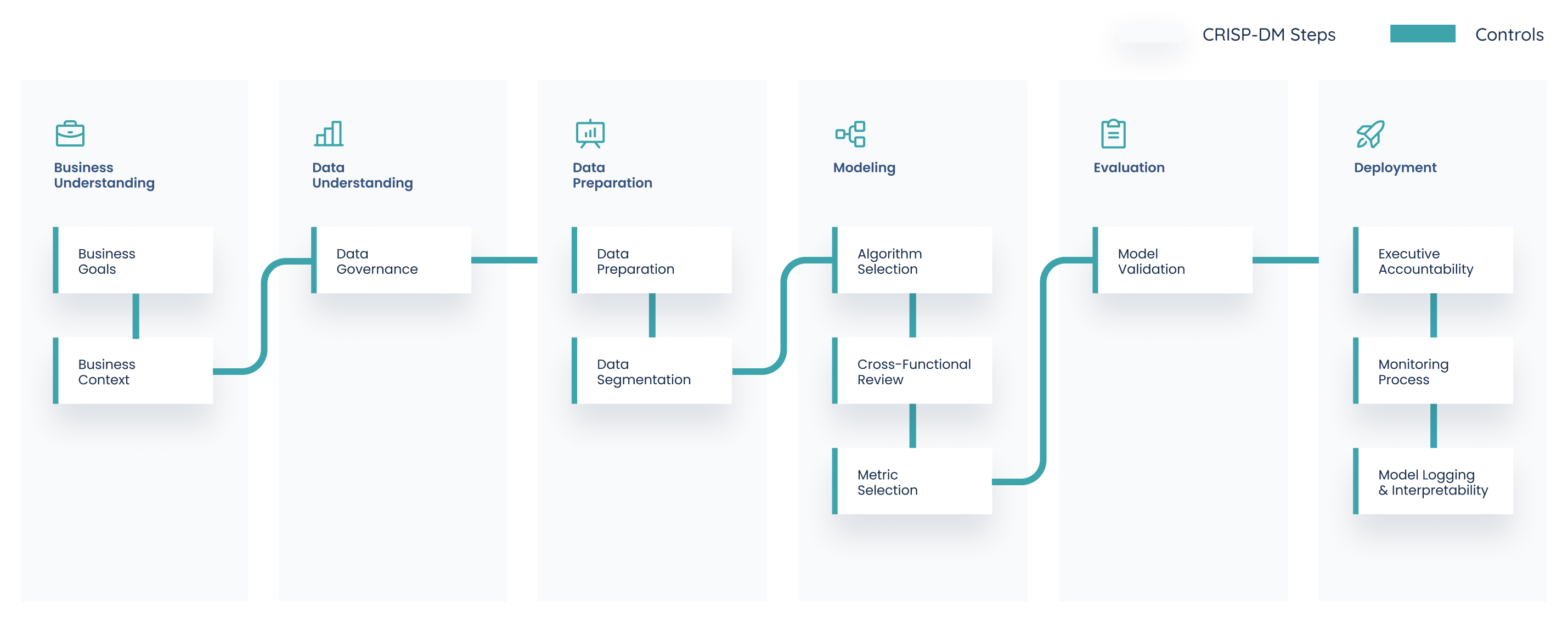

Organizations can combine the established CRISP-DM (Cross-Industry Standard Process for Data Mining) steps with the detective controls vital for machine learning systems to build a powerful framework for assurance and robust risk/control matrices.

Evaluate key business drivers and questions at project inception and revisit throughout.

- Business Goals
- Business Context

Understand data lineage, quality, and usage rights.

- Data Governance

Pre-process standardized data sets for training, test, and production.

- Data Preparation
- Data Segmentation

Create the simplest, best fit, and performant models.

- Algorithm Selection
- Cross-Functional Review
- Metric Selection

Determine accuracy and precision of models before launch and continuously in production.

- Model Validation

Deliver business sign-off and develop capacity for continuous inspection and review.

- Executive Accountability
- Monitoring Process
- Model Logging & Interpretability
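As a rough illustration of the model validation step above ("determine accuracy and precision of models before launch and continuously in production"), the following minimal Python sketch computes both metrics for a binary classifier from labeled outcomes. The names `ValidationReport` and `validate`, and the sample labels, are hypothetical and chosen for illustration only; they are not part of any Monitaur product or API.

```python
from dataclasses import dataclass


@dataclass
class ValidationReport:
    """Hypothetical container for the two metrics named in the text."""
    accuracy: float
    precision: float


def validate(y_true: list[int], y_pred: list[int], positive: int = 1) -> ValidationReport:
    """Compute accuracy and precision for one batch of binary predictions."""
    if not y_true or len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must be non-empty and of equal length")

    pairs = list(zip(y_true, y_pred))
    # Accuracy: share of all predictions that match the true label.
    correct = sum(1 for t, p in pairs if t == p)
    # Precision: share of positive predictions that are truly positive.
    true_pos = sum(1 for t, p in pairs if p == positive and t == positive)
    false_pos = sum(1 for t, p in pairs if p == positive and t != positive)
    predicted_pos = true_pos + false_pos
    precision = true_pos / predicted_pos if predicted_pos else 0.0

    return ValidationReport(accuracy=correct / len(pairs), precision=precision)


# Illustrative labels only (not real data).
report = validate(
    y_true=[1, 0, 1, 1, 0, 1, 0, 0],
    y_pred=[1, 0, 0, 1, 1, 1, 0, 0],
)
```

The same function could be run once on a held-out test set before launch and then repeatedly on batches of production outcomes, with each report stored so that a reviewer outside the development team can inspect how the metrics change over time.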